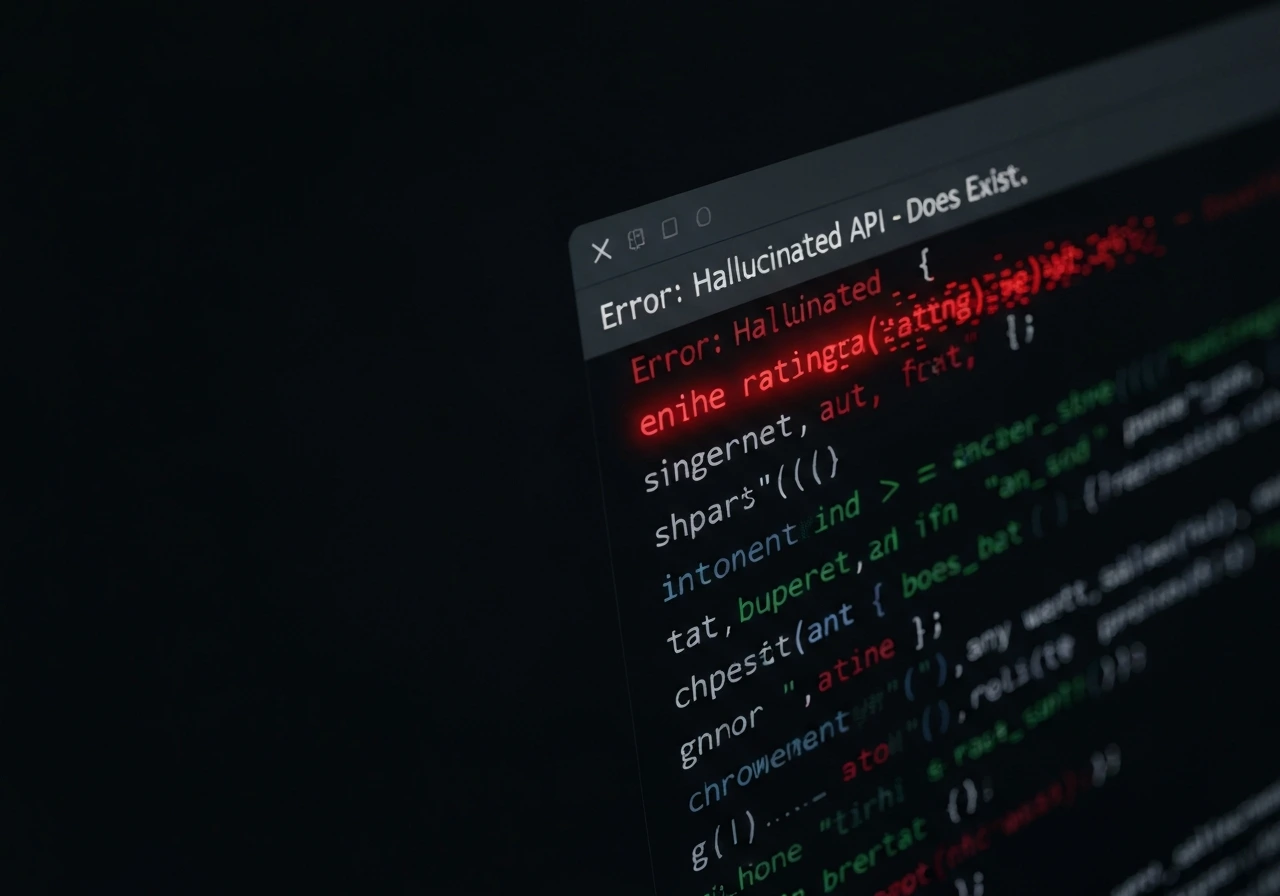

Code Hallucination Detection for AI Coding Assistants

We detect fabricated APIs, incorrect logic, deprecated methods, insecure patterns, and silently failing code generated by GitHub Copilot, Codex, and GPT-based developer tools. We test against real-world repository workflows, which exposes hallucinations that appear only during long development sessions and production-level integration.
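To make "fabricated APIs" concrete, here is a minimal Python sketch of one possible check. It is an illustration only, not a description of our actual tooling. The function name `find_fabricated_attributes` is hypothetical. The sketch assumes the generated code uses plain `import x` or `import x as y` statements and that the referenced modules are installed in the checking environment.

```python
import ast
import importlib


def find_fabricated_attributes(source: str) -> list[str]:
    """Return dotted names (e.g. 'math.fast_sqrt') that the imported module lacks.

    Hypothetical helper for illustration. It only inspects `module.attr`
    references on names bound by plain `import` statements. It never runs
    the generated source, but it does import the modules that source names,
    so it should be run in a sandbox when the input is untrusted.
    """
    tree = ast.parse(source)

    # Map each local name to the module it refers to.
    aliases: dict[str, str] = {}
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                if alias.asname:
                    aliases[alias.asname] = alias.name
                else:
                    # `import a.b` binds only the top-level name `a`.
                    top = alias.name.split(".")[0]
                    aliases[top] = top

    missing: set[str] = set()
    for node in ast.walk(tree):
        if (
            isinstance(node, ast.Attribute)
            and isinstance(node.value, ast.Name)
            and node.value.id in aliases
        ):
            module_name = aliases[node.value.id]
            try:
                module = importlib.import_module(module_name)
            except ImportError:
                # The module itself cannot be imported here.
                missing.add(module_name)
                continue
            if not hasattr(module, node.attr):
                missing.add(f"{module_name}.{node.attr}")
    return sorted(missing)


generated = """
import math
import json as j

print(math.fast_sqrt(4))
print(j.dumps({}))
print(math.sqrt(4))
"""

print(find_fabricated_attributes(generated))
```

A static check like this catches only the simplest case. It does not cover `from x import y`, method calls on objects, wrong argument lists, or the logic and security issues mentioned above, which require running code in realistic repository workflows.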