Enterprise Code Reliability Testing for AI Coding Assistants

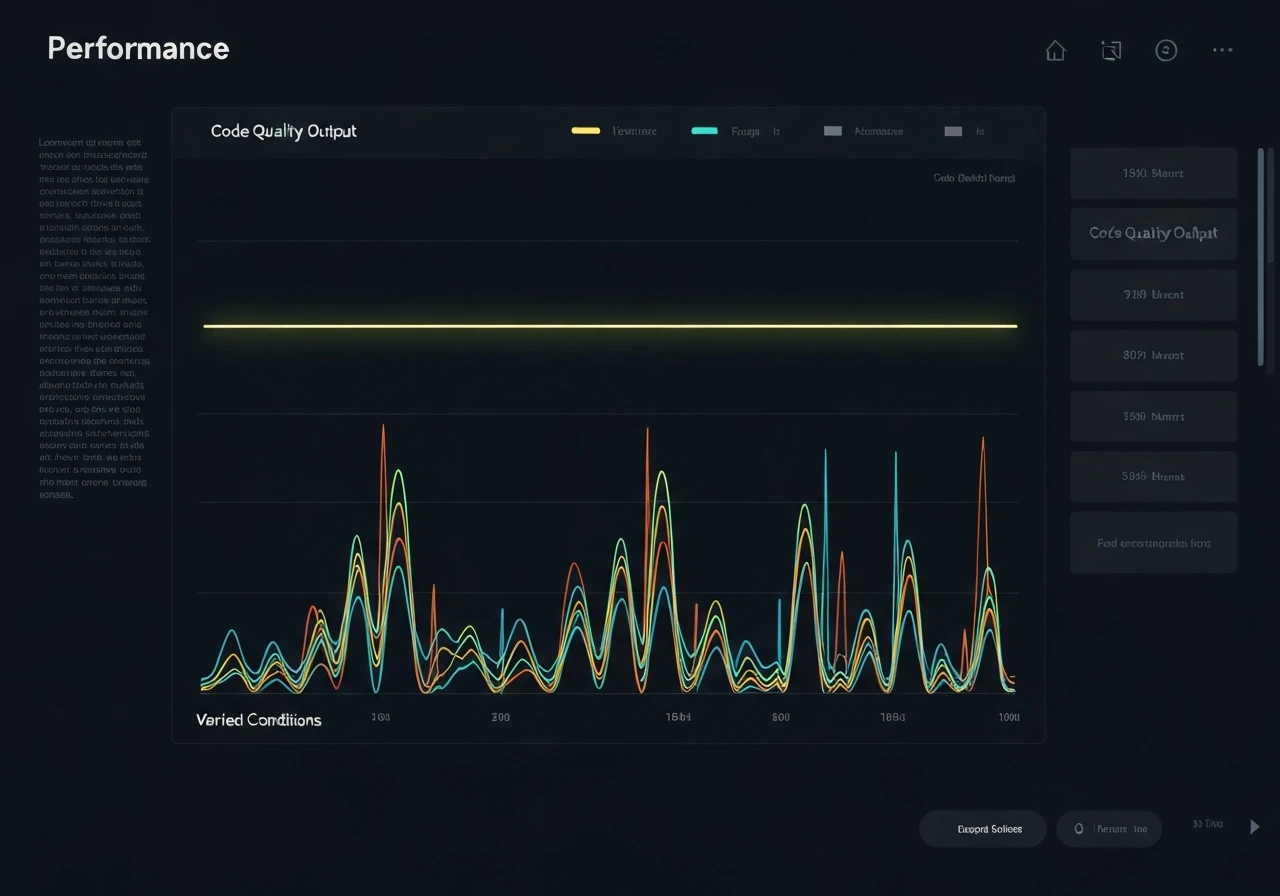

We evaluate GitHub Copilot, Codex, GPT-based coding assistants, and custom code LLMs to verify that they behave in a stable, predictable, and production-ready way across real development workflows.

Code reliability is not just about correctness in isolated prompts. It requires consistency across repeated runs, long-session context retention, edge-case handling, and architectural coherence within full repositories.
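The first of these dimensions, consistency across repeated runs, can be made concrete with a small metric. The Python sketch below is illustrative only. It assumes you have already sent the same prompt to an assistant several times and collected the generated code. It also assumes each run has been executed against a shared test suite. The helpers `normalize`, `consistency_score`, and `pass_rate` are hypothetical and are not part of any tool named above. Whitespace-only normalization is a deliberately crude stand-in for real semantic comparison.

```python
from __future__ import annotations

from collections import Counter


def normalize(code: str) -> str:
    """Collapse runs of whitespace and drop blank lines so formatting-only differences don't count as divergence.
    (Hypothetical helper; a real harness might compare ASTs or test behavior instead.)"""
    return "\n".join(
        " ".join(line.split()) for line in code.strip().splitlines() if line.strip()
    )


def consistency_score(outputs: list[str]) -> float:
    """Fraction of runs whose normalized output matches the most common normalized output."""
    if not outputs:
        raise ValueError("need at least one run")
    counts = Counter(normalize(o) for o in outputs)
    _, modal_count = counts.most_common(1)[0]
    return modal_count / len(outputs)


def pass_rate(passed: list[bool]) -> float:
    """Fraction of repeated runs whose generated code passed the same test suite."""
    if not passed:
        raise ValueError("need at least one run")
    return sum(passed) / len(passed)


if __name__ == "__main__":
    # Four hypothetical completions for the same prompt; two differ only in indentation.
    runs = [
        "def add(a, b):\n    return a + b\n",
        "def add(a, b):\n        return a + b",
        "def add(a, b):\n    return b + a",
        "def add(a, b):\n    return a + b",
    ]
    print(consistency_score(runs))               # 0.75: three of four runs agree after normalization
    print(pass_rate([True, True, False, True]))  # 0.75
```

The two scores measure different things, so they are kept separate. A model can be perfectly consistent and consistently wrong. A model can also pass tests with textually different code on every run, so a reliability report would typically track both.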