Enterprise Code Consistency & Stability Testing

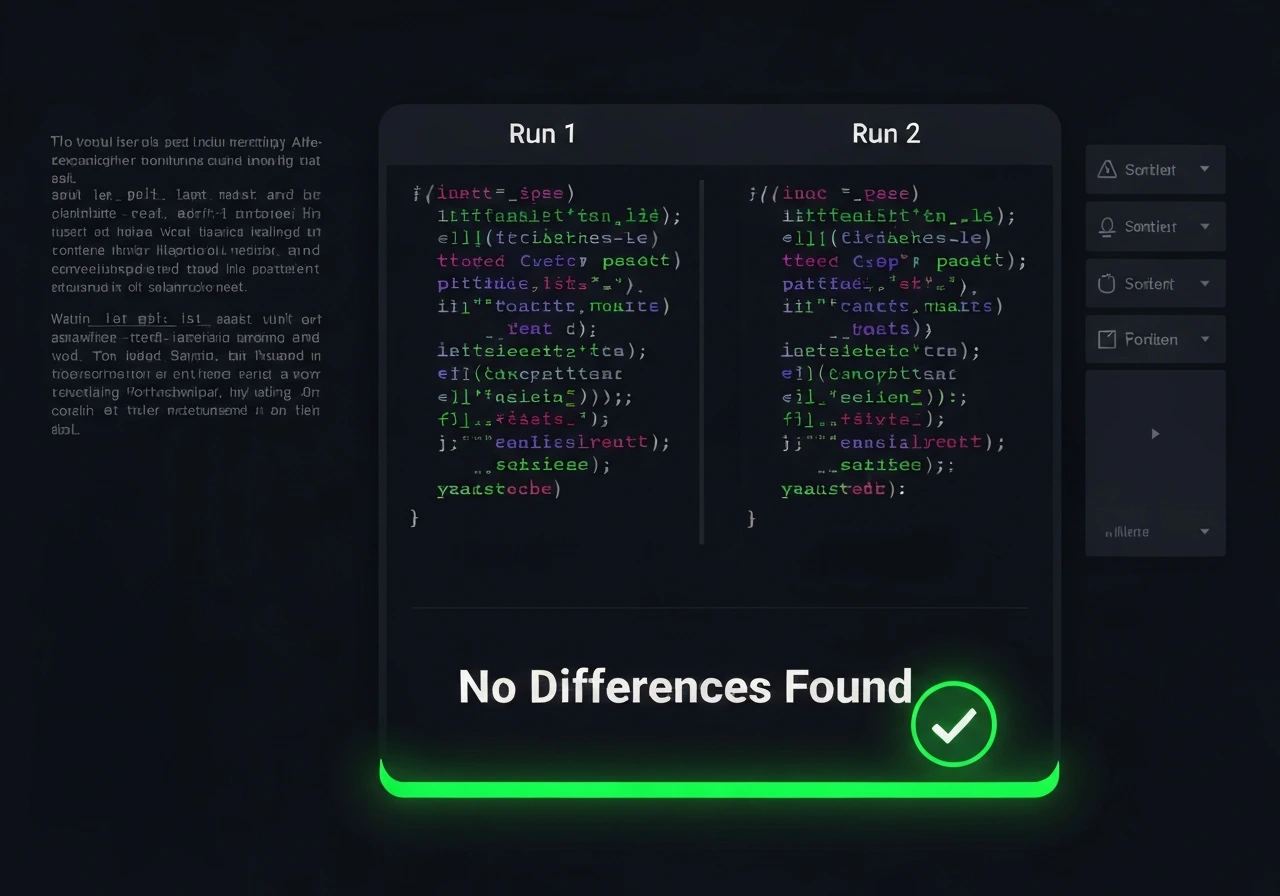

We evaluate GitHub Copilot, Codex, GPT-based coding assistants, and custom code LLMs to verify that they behave consistently across repeated prompts, iterative sessions, and production development workflows.

In real engineering environments, inconsistent output leads to unpredictable architecture, conflicting implementations, and reduced developer trust. Our structured, workflow-based testing reveals variance patterns, logic drift, and behavioral instability that single-run benchmark tests fail to detect.
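As a rough illustration of what "variance across repeated prompts" can mean in practice, the sketch below scores how similar a set of responses to the same prompt are to one another. It is a minimal example under stated assumptions, not a description of the actual test suite: the function name `consistency_score` is hypothetical, and character-level similarity from Python's standard `difflib` stands in for whatever comparison (AST diffing, test-suite agreement, and so on) a real evaluation would use.

```python
from difflib import SequenceMatcher
from itertools import combinations
from statistics import mean


def consistency_score(outputs):
    """Mean pairwise text similarity (0.0 to 1.0) across repeated outputs.

    `outputs` is a list of strings, each one a response collected from
    sending the same prompt to the assistant. Hypothetical helper: the
    metric is an assumption chosen for simplicity.
    """
    if len(outputs) < 2:
        # A single sample cannot disagree with itself.
        return 1.0
    return mean(
        SequenceMatcher(None, a, b).ratio()
        for a, b in combinations(outputs, 2)
    )


# Illustrative, hand-written samples standing in for three runs of one prompt.
samples = [
    "def add(a, b):\n    return a + b",
    "def add(a, b):\n    return a + b",
    "def add(x, y):\n    result = x + y\n    return result",
]
score = consistency_score(samples)
```

A score close to 1.0 suggests the assistant returns near-identical code on each run; lower scores flag prompts worth inspecting by hand. Textual similarity is only a proxy, since two differently worded implementations can still be functionally equivalent.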